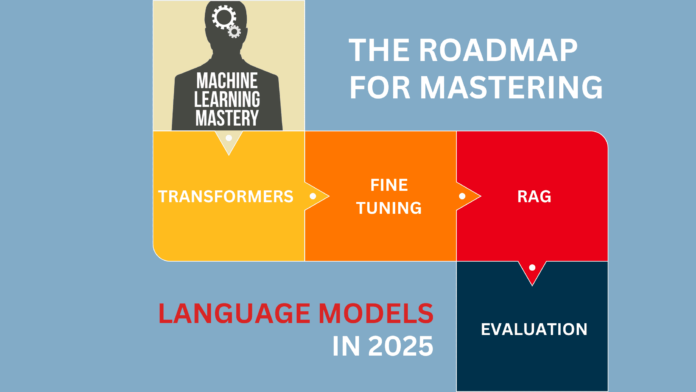

The Roadmap for Mastering Language Models in 2025

Image by Editor | Midjourney

Large language models (LLMs) are a big step forward in artificial intelligence. They can predict and generate text that reads as if a human wrote it. LLMs learn the patterns of language, such as grammar and meaning, which lets them perform many tasks: they can answer questions, summarize long texts, and even write stories. Growing demand for automatically generated and organized content is driving the expansion of the LLM market. According to the report Large Language Model (LLM) Market Size & Forecast:

“The global LLM Market is currently witnessing robust growth, with estimates indicating a substantial increase in market size. Projections suggest a notable expansion in market value, from USD 6.4 billion in 2024 to USD 36.1 billion by 2030, reflecting a substantial CAGR of 33.2% over the forecast period”

This makes 2025 a good time to start learning about LLMs. Mastering them calls for a structured, stepwise approach that covers core concepts, models, training, and optimization, as well as deployment and advanced retrieval methods. This roadmap lays out that approach step by step. So, let's get started.

Step 1: Cover the Fundamentals

You can skip this step if you already know the basics of programming, machine learning, and natural language processing. However, if you are new to these concepts, consider learning them from the following resources:

- Programming: You need to learn the basics of programming in Python, the most popular programming language for machine learning. These resources can help you learn Python:

Learn Python – Full Course for Beginners [Tutorial] – YouTube (Recommended)

Python Crash Course For Beginners – YouTube

TEXTBOOK: Learn Python The Hard Way

- Machine Learning: After you learn programming, cover the basic concepts of machine learning before moving on to LLMs. Focus on concepts like supervised vs. unsupervised learning, regression, classification, clustering, and model evaluation. The best course I found to learn the basics of ML is:

Machine Learning Specialization by Andrew Ng | Coursera

It is a paid course that you can buy if you need a certificate, but fortunately, it is also available on YouTube for free:

Machine Learning by Professor Andrew Ng

- Natural Language Processing: Learning the fundamentals of NLP is essential if you want to learn LLMs. Focus on key concepts such as tokenization, word embeddings, and attention mechanisms. Here are a few resources that might help you learn NLP:

Coursera: DeepLearning.AI Natural Language Processing Specialization – Focuses on NLP techniques and applications (Recommended)

Stanford CS224n (YouTube): Natural Language Processing with Deep Learning – A comprehensive lecture series on NLP with deep learning.
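Tokenization is one of those NLP fundamentals you will meet in every LLM. Most modern LLMs use subword tokenizers, and byte-pair encoding (BPE) is a common choice. The sketch below is a minimal, pure-Python illustration of BPE training on a tiny made-up corpus: it repeatedly merges the most frequent adjacent pair of symbols. It is a teaching toy under simplifying assumptions (whitespace pre-tokenization, no end-of-word marker, no byte-level handling), not the tokenizer of any particular model.

```python
from collections import Counter


def get_pair_counts(words):
    """Count adjacent symbol pairs, weighted by how often each word occurs."""
    counts = Counter()
    for symbols, freq in words.items():
        for a, b in zip(symbols, symbols[1:]):
            counts[(a, b)] += freq
    return counts


def merge_pair(words, pair):
    """Rewrite every word so that each occurrence of `pair` becomes one symbol."""
    merged = Counter()
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        merged[tuple(out)] += freq
    return merged


def train_bpe(corpus, num_merges):
    """Learn up to `num_merges` merge rules from a whitespace-split corpus."""
    # Start with each word split into individual characters.
    words = Counter(tuple(word) for word in corpus.split())
    merges = []
    for _ in range(num_merges):
        counts = get_pair_counts(words)
        if not counts:
            break  # every word is already a single symbol
        best = max(counts, key=counts.get)  # ties go to the first pair seen
        merges.append(best)
        words = merge_pair(words, best)
    return merges, words


merges, vocab = train_bpe("low low low lower lowest", num_merges=2)
print(merges)  # [('l', 'o'), ('lo', 'w')]
```

Production tokenizers add details this sketch omits, such as byte-level handling of arbitrary input and special tokens, but the merge loop is the same basic idea.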

Step 2: Understand Core Architectures Behind Large Language Models

Large language models rely on various architectures, with transformers being the most prominent foundation. Understanding these different architectural approaches is essential for working effectively with modern LLMs. Here are the key topics and resources to enhance your understanding:

- Understand the transformer architecture, with an emphasis on self-attention, multi-head attention, and positional encoding.

- Start with Attention Is All You Need, then explore different architectural variants: decoder-only models (GPT series), encoder-only models (BERT), and encoder-decoder models (T5, BART).

- Use libraries like Hugging Face’s Transformers to access and implement various model architectures.

- Practice fine-tuning different architectures for specific tasks like classification, generation, and summarization.
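To make self-attention and positional encoding concrete, here is a minimal pure-Python sketch of scaled dot-product attention, softmax(QK^T / sqrt(d_k)) V, as defined in Attention Is All You Need, with an optional causal mask of the kind decoder-only models use, plus that paper's sinusoidal positional encoding. It assumes Q, K, and V are given as lists of row vectors (one per token) and omits the learned projection matrices. Multi-head attention runs several such computations in parallel on different learned projections and concatenates the results. For anything beyond illustration, use NumPy or PyTorch.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(Q, K, V, causal=False):
    """Return one output vector per query row: softmax(QK^T / sqrt(d_k)) V."""
    d_k = len(K[0])
    outputs = []
    for i, q in enumerate(Q):
        scores = []
        for j, k in enumerate(K):
            if causal and j > i:
                # Causal mask: a token may not attend to later positions.
                scores.append(float("-inf"))
            else:
                scores.append(sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k))
        weights = softmax(scores)
        # Output is the attention-weighted sum of the value vectors.
        outputs.append([sum(w * v[c] for w, v in zip(weights, V)) for c in range(len(V[0]))])
    return outputs


def sinusoidal_positional_encoding(num_positions, d_model):
    """PE[pos][2i] = sin(pos / 10000^(2i/d_model)), PE[pos][2i+1] = cos(same angle)."""
    pe = []
    for pos in range(num_positions):
        row = []
        for dim in range(d_model):
            angle = pos / (10000 ** ((2 * (dim // 2)) / d_model))
            row.append(math.sin(angle) if dim % 2 == 0 else math.cos(angle))
        pe.append(row)
    return pe


x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three toy token vectors used as Q, K and V
print(scaled_dot_product_attention(x, x, x, causal=True)[0])  # [1.0, 0.0]
```

With the causal mask, the first token can only attend to itself, so its output is exactly its own value vector.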

Recommended Learning Resources

Step 3: Specializing in Large Language Models

With the basics in place, it’s time to focus specifically on LLMs. These courses are designed to deepen your understanding of their architecture, ethical implications, and real-world applications:

- LLM University – Cohere (Recommended): Offers both a sequential track for newcomers and a non-sequential, application-driven path for seasoned professionals. It provides a structured exploration of both the theoretical and practical aspects of LLMs.

- Stanford CS324: Large Language Models (Recommended): A comprehensive course exploring the theory, ethics, and hands-on practice of LLMs. You will learn how to build and evaluate LLMs.

- Maxime Labonne Guide (Recommended): This guide provides a clear roadmap for two career paths: LLM Scientist and LLM Engineer. The LLM Scientist path is for those who want to build advanced language models using the latest techniques. The LLM Engineer path focuses on creating and deploying applications that use LLMs. It also includes The LLM Engineer’s Handbook, which takes you step by step from designing to launching LLM-based applications.

- Princeton COS597G: Understanding Large Language Models: A graduate-level course that covers models like BERT, GPT, T5, and more. Ideal for those aiming to engage in deep technical research, it explores both the capabilities and limitations of LLMs.

- Fine Tuning LLM Models – Generative AI Course: When working with LLMs, you will often need to fine-tune them, so consider learning parameter-efficient fine-tuning techniques such as LoRA and QLoRA, as well as model quantization. Quantization shrinks model size, and LoRA and QLoRA cut the number of trainable parameters; together they lower computational requirements while largely maintaining performance. This course teaches fine-tuning with LoRA and QLoRA, as well as quantization, using Llama 2, Gradient, and Google's Gemma model.

- Finetune LLMs to teach them ANYTHING with Huggingface and Pytorch | Step-by-step tutorial: It provides a comprehensive guide on fine-tuning LLMs using Hugging Face and PyTorch. It covers the entire process, from data preparation to model training and evaluation, enabling viewers to adapt LLMs for specific tasks or domains.
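The core idea behind LoRA fits in a few lines. Instead of updating a pretrained weight matrix W0 (d_out × d_in), LoRA freezes it and learns a low-rank update BA, with A of shape r × d_in and B of shape d_out × r, so the layer computes W0·x + (alpha / r)·B·A·x. B starts at zero, so the adapted layer initially behaves exactly like the original. The pure-Python class below is a hypothetical illustration of that forward pass and of the parameter savings only; it has no training loop, and QLoRA's 4-bit storage of W0 is not shown. In practice you would use a library such as Hugging Face PEFT.

```python
import random


def matvec(M, x):
    """Multiply matrix M (list of rows) by vector x."""
    return [sum(m * xi for m, xi in zip(row, x)) for row in M]


class LoRALinear:
    """Hypothetical LoRA-wrapped linear layer: y = W0 x + (alpha / r) * B (A x)."""

    def __init__(self, W0, r, alpha, seed=0):
        d_out, d_in = len(W0), len(W0[0])
        rng = random.Random(seed)
        self.W0 = W0  # frozen pretrained weights, d_out x d_in
        # A gets small random values; B starts at zero, so the update BA is zero.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(r)]  # r x d_in
        self.B = [[0.0] * r for _ in range(d_out)]  # d_out x r
        self.scale = alpha / r

    def forward(self, x):
        base = matvec(self.W0, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]


def lora_param_count(d_out, d_in, r):
    """Trainable parameters in A (r x d_in) plus B (d_out x r)."""
    return r * d_in + d_out * r


layer = LoRALinear([[1.0, 2.0], [3.0, 4.0]], r=1, alpha=2)
print(layer.forward([1.0, 1.0]))  # [3.0, 7.0], identical to W0 x at initialization
print(lora_param_count(4096, 4096, r=8), 4096 * 4096)  # 65536 16777216
```

For a 4096 × 4096 projection with r = 8, LoRA trains 65,536 parameters instead of about 16.8 million, roughly 0.4% of the full matrix.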

Step 4: Build, Deploy & Operationalize LLM Applications

Learning a concept theoretically is one thing; applying it practically is another. The former strengthens your understanding of fundamental ideas, while the latter enables you to translate those concepts into real-world solutions. This section focuses on integrating large language models into projects using popular frameworks, APIs, and best practices for deploying and managing LLMs in production and local environments. By mastering these tools, you’ll efficiently build applications, scale deployments, and implement LLMOps strategies for monitoring, optimization, and maintenance.

- Application Development: Learn how to integrate LLMs into user-facing applications or services.

- LangChain: LangChain is a popular framework for building LLM-powered projects. Learn how to build applications with it.

- API Integrations: Explore how to connect various APIs, like OpenAI’s, to add advanced features to your projects.

- Local LLM Deployment: Learn to set up and run LLMs on your local machine.

- LLMOps Practices: Learn the methodologies for deploying, monitoring, and maintaining LLMs in production environments.
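A small but recurring LLMOps task is wrapping every model call with retries and latency logging, since hosted LLM APIs can fail transiently or rate-limit requests. The sketch below assumes a hypothetical `call_llm(prompt)` function standing in for whatever client you use (OpenAI's SDK, a local model server, and so on). It retries with exponential backoff and logs each attempt's latency using Python's standard `logging` module. It is a minimal illustration, not a production-grade client.

```python
import logging
import time

logger = logging.getLogger("llm_app")


def call_with_retries(call_llm, prompt, max_retries=3, base_delay=0.5, sleep=time.sleep):
    """Call the hypothetical `call_llm(prompt)`, retrying with exponential backoff.

    Waits base_delay * 2**attempt seconds after each failure and logs latency per attempt.
    """
    for attempt in range(max_retries + 1):
        start = time.perf_counter()
        try:
            result = call_llm(prompt)
        except Exception as exc:  # real code should catch the client's specific error types
            elapsed = time.perf_counter() - start
            logger.warning("attempt=%d latency=%.3fs error=%r", attempt, elapsed, exc)
            if attempt == max_retries:
                raise  # out of retries: surface the last error
            sleep(base_delay * 2 ** attempt)
        else:
            elapsed = time.perf_counter() - start
            logger.info("attempt=%d latency=%.3fs ok", attempt, elapsed)
            return result


# Usage with a stub that fails twice before succeeding (no real API involved).
attempts = []


def flaky_llm(prompt):
    attempts.append(prompt)
    if len(attempts) < 3:
        raise RuntimeError("transient error")
    return f"echo: {prompt}"


waits = []
print(call_with_retries(flaky_llm, "Hello", sleep=waits.append))  # echo: Hello
print(waits)  # [0.5, 1.0]
```

The `sleep` parameter is injectable so the backoff schedule can be tested without actually waiting; in production you would leave it as `time.sleep` and ship the logged latencies to your monitoring system.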

Recommended Learning Resources & Projects

Building LLM applications:

Local LLM Deployment:

Containerizing LLM-Powered Apps: Chatbot Deployment – A step-by-step guide to deploying local LLMs with Docker.

Deploying & Managing LLM applications In Production Environments:

GitHub Repositories:

- Awesome-LLM: It is a curated collection of papers, frameworks, tools, courses, tutorials, and resources focused on large language models (LLMs), with a special emphasis on ChatGPT.

- Awesome-langchain: This repository is a hub for tracking initiatives and projects related to LangChain's ecosystem.

Step 5: RAG & Vector Databases

Retrieval-Augmented Generation (RAG) is a hybrid approach that combines information retrieval with text generation. Instead of relying only on knowledge learned during pre-training, RAG retrieves relevant documents from external sources and supplies them to the model before it generates a response. This can improve accuracy, reduce hallucinations, and make models more useful for knowledge-intensive tasks.
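The retrieve-then-generate flow described above can be sketched in plain Python. This is a minimal illustration under loud assumptions: `embed` is a hypothetical stand-in that returns a bag-of-words vector (a real system would use a dense embedding model and a vector database, which capture semantic similarity rather than word overlap), and the final step only builds the augmented prompt instead of calling an LLM.

```python
import math
from collections import Counter


def embed(text):
    """Hypothetical stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, docs, k=2):
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query, docs, k=2):
    """Augment the user question with retrieved context before generation."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}\nAnswer:"


docs = [
    "paris is the capital of france",
    "the eiffel tower is in paris",
    "python is a programming language",
]
print(retrieve("what is the capital of france", docs, k=1))  # ['paris is the capital of france']
```

Swapping `embed` for a real embedding model and the sorted list for a vector database query keeps the same overall shape: embed the query, fetch the nearest documents, and prepend them to the prompt.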

- Understand RAG & its Architectures (e.g., standard RAG, Hierarchical RAG, Hybrid RAG)

- Vector Databases – Understand how to implement vector databases with RAG. Vector databases store embeddings and retrieve information by semantic similarity rather than exact keyword matches. This makes them well suited to RAG-based applications because they allow fast, efficient retrieval of relevant documents.

- Retrieval Strategies – Implement dense retrieval, sparse retrieval, and hybrid search for better document matching.

- LlamaIndex & LangChain – Learn how these frameworks facilitate RAG.

- Scaling RAG for Enterprise Applications – Understand distributed retrieval, caching, and latency optimizations for handling large-scale document retrieval.
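For the hybrid search mentioned above, one common way to combine a dense (embedding) ranking with a sparse (for example, BM25) ranking is reciprocal rank fusion (RRF), which scores each document as the sum of 1 / (k + rank) over the input rankings. The sketch below assumes you already have the two ranked lists of document IDs; the IDs are made up, and k = 60 is the constant commonly used with RRF. Other fusion strategies, such as weighted score combination, are equally valid.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in (rank starts at 1).
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


dense_ranking = ["d1", "d2", "d3"]   # hypothetical results from vector search
sparse_ranking = ["d3", "d1", "d4"]  # hypothetical results from keyword (BM25) search
print(reciprocal_rank_fusion([dense_ranking, sparse_ranking]))  # ['d1', 'd3', 'd2', 'd4']
```

Because RRF uses only ranks, it sidesteps the problem that dense similarity scores and sparse keyword scores live on different scales.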

Recommended Learning Resources & Projects

Basic Foundational courses:

Advanced RAG Architectures & Implementations:

Enterprise-Grade RAG & Scaling:

Step 6: Optimize LLM Inference

Optimizing inference is crucial for making LLM-powered applications efficient, cost-effective, and scalable. This step focuses on techniques to reduce latency, improve response times, and minimize computational overhead.

Key Topics

- Model Quantization: Reduce model size and improve speed using techniques like 8-bit and 4-bit quantization (e.g., GPTQ, AWQ).

- Efficient Serving: Deploy models efficiently with frameworks like vLLM, TGI (Text Generation Inference), and DeepSpeed.

- LoRA & QLoRA: Use parameter-efficient fine-tuning methods to adapt models to specific tasks without high resource costs.

- Batching & Caching: Optimize API calls and memory usage with batch processing and caching strategies.

- On-Device Inference: Run LLMs on edge devices using the GGUF model format (used by llama.cpp) and optimized runtimes like ONNX Runtime and TensorRT.
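To see what quantization does at its simplest, the sketch below applies symmetric absmax int8 quantization to a list of weights: it maps the largest-magnitude weight to ±127, rounds every other weight to the nearest integer step, and stores one floating-point scale. This is plain round-to-nearest quantization, not GPTQ or AWQ, which choose quantized values more carefully to limit accuracy loss. The weight values are made up for illustration.

```python
def quantize_int8(weights):
    """Symmetric absmax quantization: int8 values in [-127, 127] plus one float scale."""
    scale = max(abs(w) for w in weights) / 127 or 1.0  # avoid a zero scale for all-zero input
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q, scale):
    """Approximate the original weights from the int8 values."""
    return [v * scale for v in q]


weights = [0.5, -1.27, 0.0, 1.27]
q, scale = quantize_int8(weights)
print(q)  # [50, -127, 0, 127]
restored = dequantize_int8(q, scale)
# Round-to-nearest error is at most half a quantization step per weight.
print(max(abs(w - r) for w, r in zip(weights, restored)) <= scale / 2)  # True
```

Storing int8 instead of 32-bit floats cuts weight memory by 4x (2x versus 16-bit floats), at the cost of the rounding error checked in the last line. Practical schemes usually keep one scale per channel or per small group of weights rather than one per tensor.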

Recommended Learning Resources

- Efficiently Serving LLMs – Coursera – A guided project on optimizing and deploying large language models efficiently for real-world applications.

- Mastering LLM Inference Optimization: From Theory to Cost-Effective Deployment – YouTube – A tutorial discussing the challenges and solutions in LLM inference. It focuses on scalability, performance, and cost management. (Recommended)

- MIT 6.5940 Fall 2024 TinyML and Efficient Deep Learning Computing – It covers model compression, quantization, and optimization techniques to deploy deep learning models efficiently on resource-constrained devices. (Recommended)

- Inference Optimization Tutorial (KDD) – Making Models Run Faster – YouTube – A tutorial from the Amazon AWS team on methods to accelerate LLM runtime performance.

- Large Language Model inference with ONNX Runtime (Kunal Vaishnavi) – A guide on optimizing LLM inference using ONNX Runtime for faster and more efficient execution.

- Run Llama 2 Locally On CPU without GPU GGUF Quantized Models Colab Notebook Demo – A step-by-step tutorial on running LLaMA 2 models locally on a CPU using GGUF quantization.

- Tutorial on LLM Quantization w/ QLoRA, GPTQ and Llamacpp, LLama 2 – Covers various quantization techniques like QLoRA and GPTQ.

- Inference, Serving, PagedAttention and vLLM – Explains inference optimization techniques, including PagedAttention and vLLM, to speed up LLM serving.

Wrapping Up

This guide lays out a comprehensive roadmap for learning and mastering LLMs in 2025. It might seem overwhelming at first, but if you follow this step-by-step approach, you will steadily cover everything you need. If you have any questions or need more help, leave a comment.